Smarts, Artificial and Human

Monday, November 15, 2021

How much should you trust Alexa? Will you let it tell you what to do next?

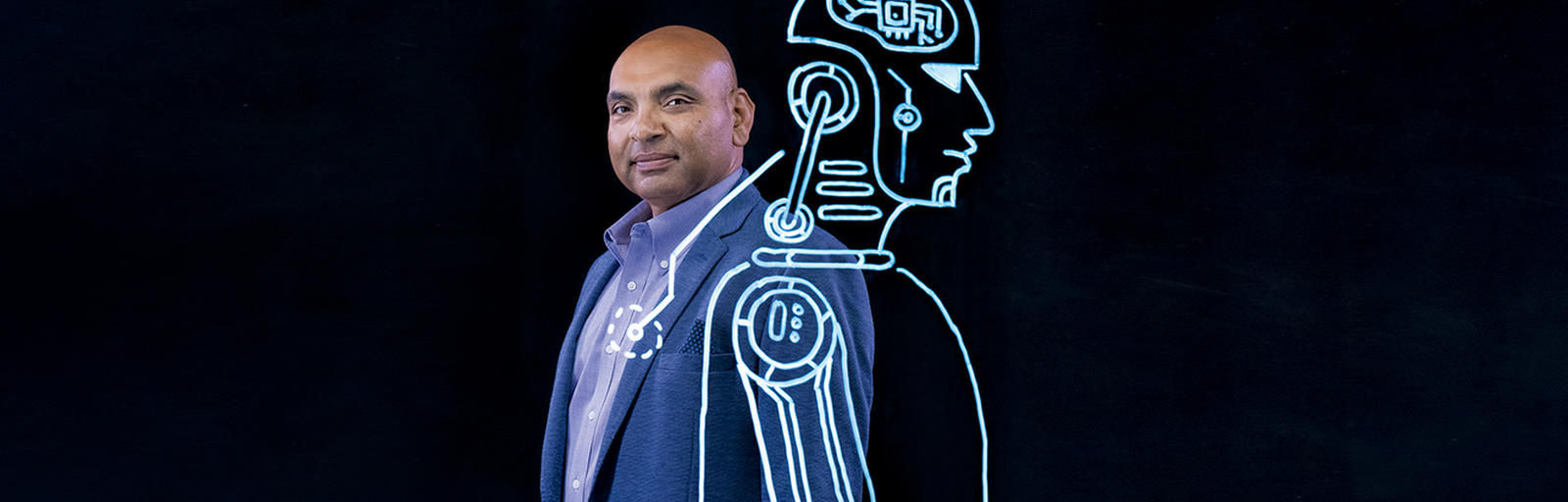

In a sense, these are questions Alok Gupta believes we all should be asking.

In two recent research papers, Gupta, the Curtis L. Carlson Chair in Information Management and the Carlson School’s Senior Associate Dean of Faculty and Research, tested how humans and machines work together. His findings regarding artificial intelligence (AI) demonstrate why humans shouldn’t rely too much on machines for making decisions.

In a paper published in the September issue of MIS Quarterly, Gupta provocatively asks whether “humans-in-the-loop will become Borgs.” The term “humans-in-the-loop” refers to hybrid work environments where humans and AI “machines” collaborate. A second paper, which will appear in a future issue of Information Systems Research, demonstrates that humans have cognitive limitations in their metaknowledge, that is, our knowledge or assessment of what we don’t know. This limitation makes it difficult for us to delegate knowledge work to machines, even when we should. Gupta co-authored both papers with Andreas Fügener, Jörn Grahl, and Wolfgang Ketter, all members of the University of Cologne’s Faculty of Management, Economics, and Social Sciences.

AI is no longer sci-fi. More and more businesses have incorporated AI and machine learning into their processes to make better decisions relating to contracts, supply chains, and consumer behavior. Scholars have been studying humans-in-the-loop work environments for some time, and Gupta himself has conducted research in this area. But he notes that studies have tended to focus “on short-term performance and not what happens in longer-term decision-making processes.”

As these papers show, those longer-term effects aren’t all positive. In fact, “simulation results based on our experimental data suggest that groups of humans interacting with AI are far less effective as compared to human groups without AI assistance,” Gupta says.

Reliance on AI-assisted decision-making falls especially short in the development of new solutions that can generate genuine innovation. “Humans have an uncanny ability to connect the seemingly unconnected dots to come up with solutions that are not generated by linear thinking,” Gupta notes. “Most innovations occur when humans face challenges in their day-to-day environments. The more humans are removed from any environment, the more unlikely it will become to innovate in that particular space or to make accidental discoveries.”

In other words, by relying too much on AI for decision-making, we are in danger of surrendering our greatest strength—the capacity to see things in our own unique ways. We also risk not being able to leverage the insights that other people can provide.

On the other hand, the co-authors’ newest paper notes that being unaware of what we don’t know fundamentally limits how well we can collaborate with AI, and therefore how useful AI can be. There are times when humans need to, in a sense, “defer” to the machines. The authors’ research shows that humans and AI improve their collaborative performance when AI delegates certain decision-making tasks to humans, not the other way around.

All this certainly doesn’t mean AI isn’t a highly useful tool. However, the rush to adopt AI-based technologies to replace or augment human work has its perils. An approach to human-AI interaction that Gupta and his co-authors recommend is what they call “personalized AI advice.” This means designing an AI system that can observe an individual’s decision-making and assess where its “advice” falls short, based on an individual’s decision patterns. “It can then set an appropriate level of advice that optimizes the joint performance of the human plus AI while retaining human individuality and uniqueness,” Gupta says.

“The implication of our work,” he adds, “is that we need to ‘teach’ future knowledge workers not just about new competencies but also about how to assess their own limitations in those competencies so that they can effectively work with AI-based machines.”

As Gupta notes, “there has been a lot of talk about AI taking over jobs. Many researchers feel that this estimate is overblown.” Human creativity and insight will always be necessary. There’s a great deal AI can and will do to help us make better decisions. But as Gupta’s work suggests, we humans (and not Alexa) should have the final say, using our distinctive capacity for judgment.

“Will Humans-in-the-Loop Become Borgs? Merits and Pitfalls of Working with AI”

Fügener, A., Grahl, J., Gupta, A., and Ketter, W., Management Information Systems Quarterly (MISQ), (July 2021)

“Cognitive challenges in human-AI collaboration: Investigating the path towards productive delegation”

Fügener, A., Grahl, J., Gupta, A., and Ketter, W., Information Systems Research, (Forthcoming)